“We make body image issues worse for one in three teen girls,” was a statement from a 2019 research report produced by Facebook on the impacts of Instagram (which is owned by Facebook) on teen mental health. This is just one of the many things we learned from the documents and testimony provided by a Facebook whistleblower to the Wall Street Journal, 60 Minutes and Congress. Less than a week later, a six-hour Facebook outage that also took down Instagram, WhatsApp, and Messenger (all also owned by Facebook) left people in countries all over the world unable to talk to loved ones, conduct business or – in places like Afghanistan and Syria – coordinate travel to escape life-or-death situations. Then, during the aforementioned congressional hearings, elected officials demonstrated their lack of understanding of technology that has been around for almost two decades.

All these events sparked outrage across the political spectrum, but that outrage failed to coalesce around what we should do in response. Getting the answer to this question – what we should do about social media right now – matters, because the technological change we are going to see in the next 30 years is going to make the tech explosion of the last 30 years look insignificant. We must be prepared to take advantage of technology before it takes advantage of us. If a simple algorithm that uses basic artificial intelligence (AI) to show you pictures and text based on your previous preferences is influencing suicide rates in teens and sparking an unprecedented level of political contempt leading to riots and insurrections, then what is going to happen when more powerful tools like artificial general intelligence (AGI), a system that is smarter than the smartest human at most tasks, become ubiquitous?

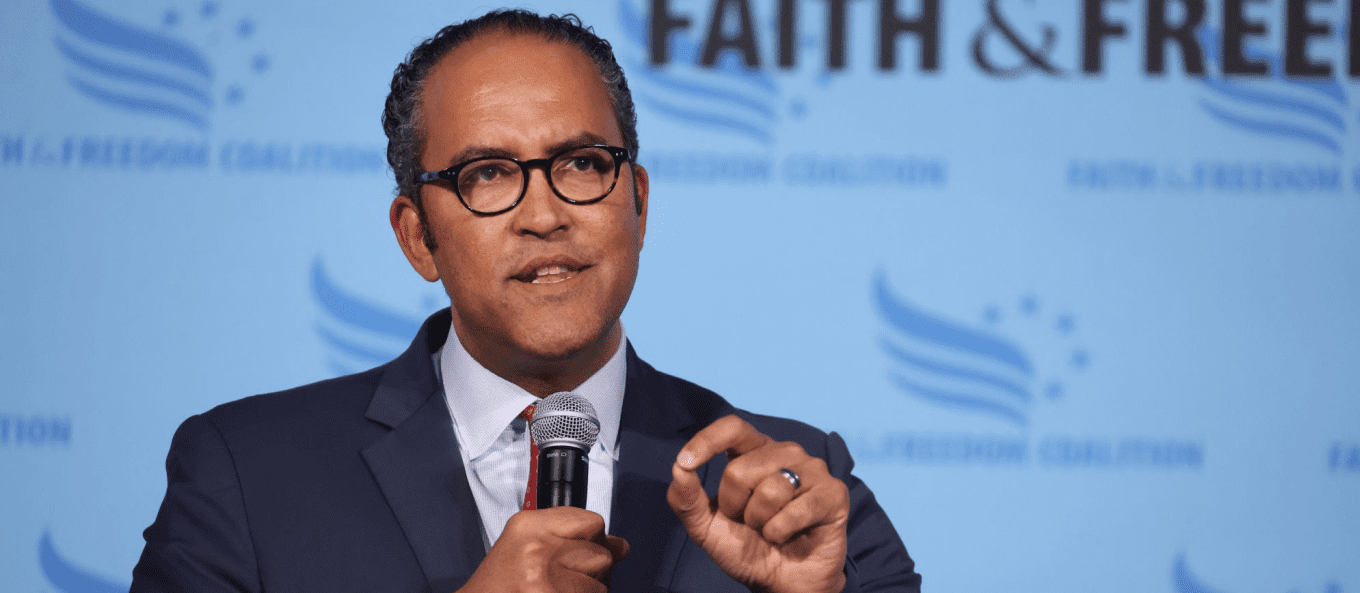

At the core of this problem is the need to answer a simple question – how can society ensure that basic human values like fairness are encoded into these systems? This simple question doesn’t have a simple answer, but as we debate it there is something we can do right now. We need explainable AI that allows humans to understand the reasons an algorithm produced a particular result. We need to know how and why an AI system made a decision, and those explanations need to be made public. Many AI systems are seen as black boxes: an expert might be able to explain how the system works in theory but is unable to explain how it reached a specific decision at a very granular level. Being able to explain why, for example, the Facebook algorithm showed a user a particular post will foster more transparency about how companies are using these powerful tools and lead us to ways of improving the outcomes of these systems.

The dangers of social media co-exist with the dangers of asking elected officials to regulate something they don’t fully understand. The federal government needs to streamline hiring practices so men and women with experience in technology can lend their skills to the public sector as staffers or advisors for a few years before going back into academia or the private sector. To ensure the government gets technology regulation right, we need to treat an understanding of technology as a prerequisite for elected office. This also means we need more people running for office who understand technology. There are opportunities to make this happen. According to BallotReady, 70% of races in 2020 were unopposed. There are about 75,000 upcoming elections at the state, local, and federal levels, and companies like Snapchat are making it easier to expand the number of Americans who consider running in local elections.

Until we have smarter elected officials implementing smarter legislation, this growing “techlash,” a strong backlash against big technology companies, will continue to snowball because of a perception that these companies are putting profit over safety. But as Scott Pelley stated in his 60 Minutes interview of the Facebook whistleblower, social media is “amplifying the most negative aspects of human behavior.” What does this tell us about ourselves? Are we going to let technology take advantage of our basest instincts? Or are we going to take advantage of technology to do things like enable humans to live longer and healthier lives, make nations safer and more secure, and use our natural resources more efficiently? Achieving the latter starts in a rather counterintuitive way – we need to put down our devices, go outside and rediscover our humanity.

To learn more about AI and Ethics, click here.

For some deep thinking on Artificial General Intelligence (AGI) go here.

For thoughts on how we need to change our strategy with our adversaries like China, click here.